Source: John Lund / Getty

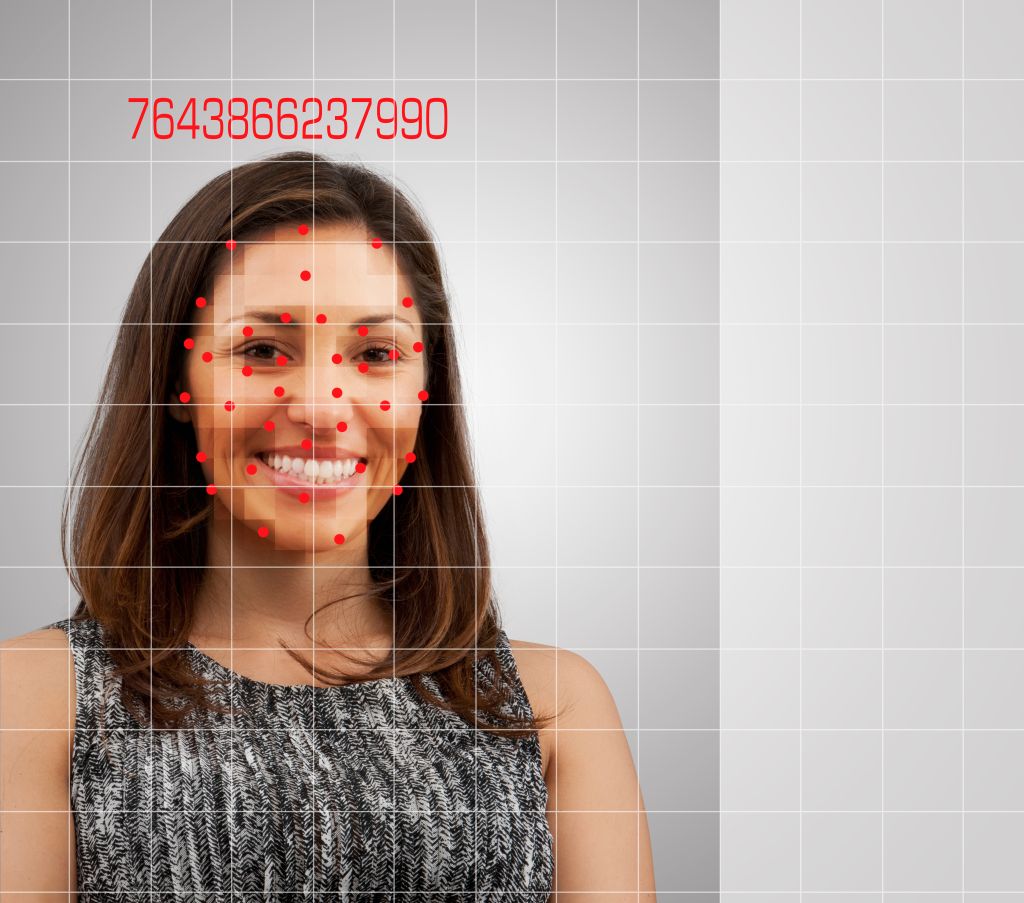

Coded Bias, the new Netflix documentary exploring racist technologies, including facial recognition and algorithms, is one to watch.

The film opens with Joy Buolamwini, an MIT researcher who uncovered racial bias in several major facial recognition programs. Three years ago, Buolamwini found that commercially available facial recognition software had an inherent race and gender bias. In Coded Bias, Buolamwini shares how she discovered the issue. Looking into the data sets used to train the software, she discovered that most of the faces used belonged to white men.

Buolamwini’s story serves as the starting point for discussing discrimination across various types of technology and data practices. Coded Bias documents several examples of how technological efficiency does not always lead to what is morally correct.

One such incident involves neighbors in a Brooklyn building where the landlord tried to implement a facial recognition software program. Tenants of the Atlantic Towers Apartments in Brownsville sued to prevent what they called a privacy intrusion.

The documentary also highlights efforts overseas to address technological racism. In one scene, police in the U.K., relying on facial recognition software, misidentify and detain a 14-year-old Black student. In another, police in the U.K. stop a man who covered his face because he did not want the software to scan him.

In just under 90 minutes, Coded Bias breaks down the complexity of technological racism. Racism in algorithms, particularly when used by law enforcement, is getting increasing attention. While several cities have banned the use of such software, Detroit has continued to move forward with it.

In June of last year, Detroit's police chief acknowledged the software misidentified people approximately 96 percent of the time. Within three months, the city council renewed and expanded its relationship with the tech manufacturer, ignoring the glaring error.

The New York Police Department previously claimed to have made only limited use of software from Clearview AI. But a recent investigation from BuzzFeed showed the NYPD was among thousands of government agencies that used Clearview AI’s products. Officers conducted approximately 5,100 searches using an app from the tech company.

Facial recognition and biased data practices exist across multiple sectors. Algorithms and other technology can take on the flawed assumptions and biases inherent in society. In February, Data 4 Black Lives launched #NoMoreDataWeapons to raise awareness about using data and other forms of technology to surveil and criminalize Black people.

Similarly, MediaJustice has organized around equity in technology and media, including electronic monitoring and protecting Black dissent. During a Q&A last week with the Coded Bias Twitter account, MediaJustice, which has mapped electronic monitoring hotspots around the country, pointed out the use of surveillance technology, including facial recognition software, to track protestors.

Netflix’s ‘Coded Bias’ Documentary Uncovers Racial Bias in Technology was originally published on newsone.com